Why this matters: Mastering agents is the key to building AI systems that can actually *do* things in the real world.

Attention Activity: The Illusion of Action

What happens when you ask an AI to interact with the real world, like booking a flight? Let's observe the difference between passive and active AI.

Passive LLM

Agent LLM

The passive model only generates words. The Agent plans a sequence of steps, executes an external tool, and observes the result.

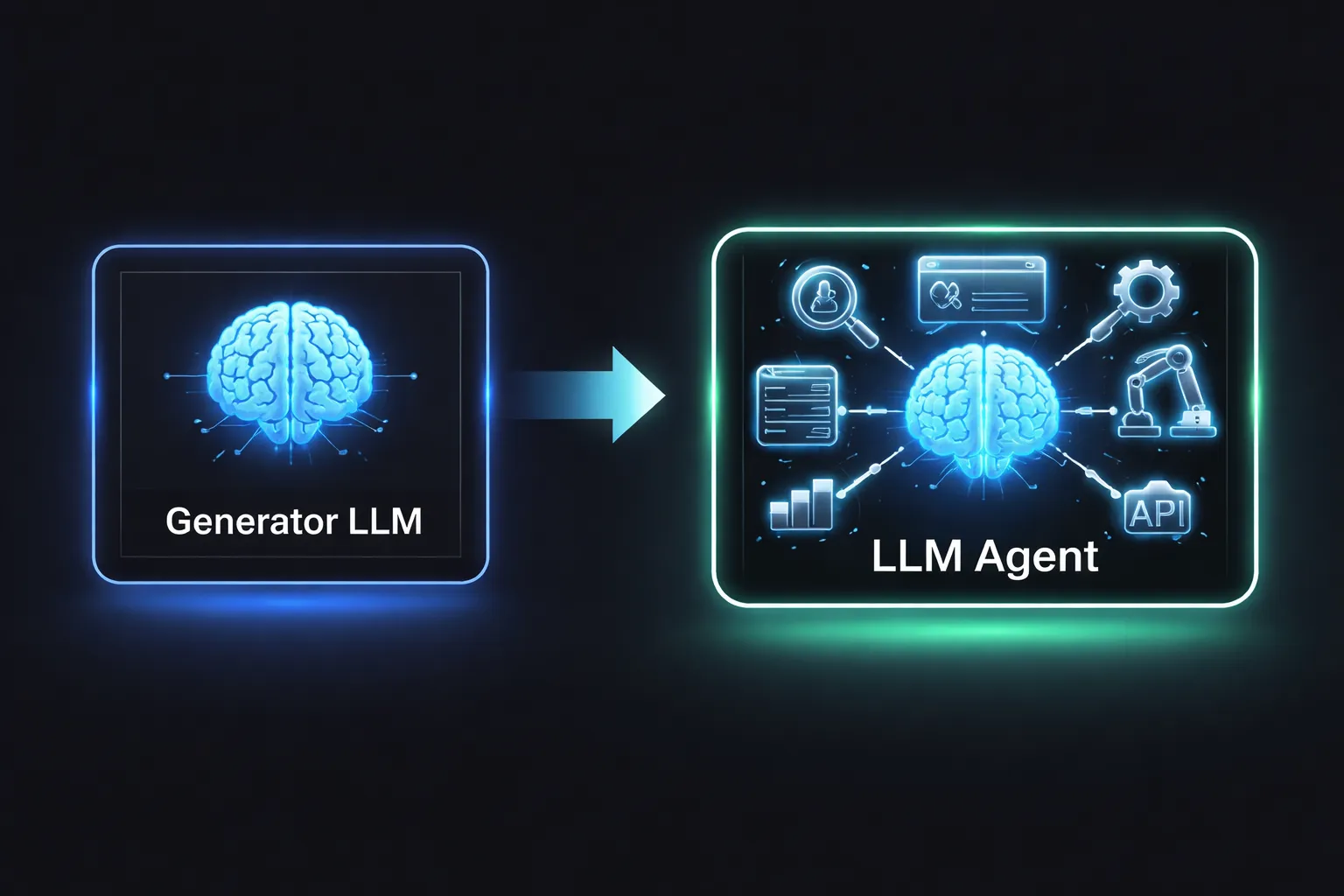

From Generators to Agents

Traditional Large Language Models (LLMs) take an input prompt and predict the next token (roughly, the next word or word fragment). They are closed boxes, relying entirely on static training data.

LLM Agents break out of the box. Given access to external tools, the LLM evolves into an active participant. The model becomes the "cognitive engine" that decides which tool to use and routes data between steps.

The Execution Loop (ReAct)

How do agents actually "think"? They rely on cognitive architectures, the most famous being the ReAct (Reason + Act) loop. The agent iterates through a cycle until its overarching goal is met.

1. Reason: The LLM analyzes the goal and decides on a plan.

2. Act: The LLM requests an external tool call (like searching the web), which the surrounding software executes.

3. Observe: The LLM reads the result from the tool and feeds it back into the reasoning step.
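
To make the three steps concrete, here is a minimal sketch of the loop in Python. It is an illustration, not a reference implementation: `fake_llm`, `search_flights`, and the `TOOLS` registry are hypothetical stand-ins, and the "model" is a hard-coded function rather than a real LLM call.

```python
from typing import Callable

def search_flights(query: str) -> str:
    """Hypothetical tool: a stand-in for a real flight-search API."""
    return "Flight XY123 departs 09:00, $420"

# Hypothetical tool registry: tool name -> Python function.
TOOLS: dict[str, Callable[[str], str]] = {"search_flights": search_flights}

def fake_llm(goal: str, observations: list[str]) -> dict:
    """Stand-in for a model call. A real agent would prompt an LLM here."""
    if not observations:
        # Nothing is known yet, so the "model" asks for a tool call.
        return {"thought": "I need flight options.",
                "action": "search_flights", "input": goal}
    # An observation is available, so the "model" finishes with an answer.
    return {"thought": "I have what I need.",
            "final": f"Found: {observations[-1]}"}

def run_agent(goal: str, max_steps: int = 5) -> str:
    observations: list[str] = []
    for _ in range(max_steps):
        step = fake_llm(goal, observations)             # Reason
        if "final" in step:
            return step["final"]
        result = TOOLS[step["action"]](step["input"])   # Act
        observations.append(result)                     # Observe
    return "Stopped: step limit reached"

print(run_agent("NYC to LAX"))
```

The `max_steps` cap is a design choice in this sketch: it keeps the loop from running forever if the model never produces a final answer.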

Knowledge Check

In the ReAct execution loop, what does the agent do during the "Observe" phase?

Function & Tool Calling Mechanics

How does a text generation model physically "use" a tool? Through a mechanism called Function Calling.

Developers provide the LLM with a list of available tools and their required data formats (schemas). When the agent decides to act, it doesn't run code itself; it generates a highly structured text object (usually JSON).

The surrounding software architecture catches this JSON, executes the actual API request or database query, and hands the raw data back to the LLM as an observation.
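
The hand-off described above can be sketched in a few lines of Python. Everything here is assumed for illustration: the `get_weather` tool, the schema layout, and the `{"name": ..., "arguments": ...}` shape of the model's output are hypothetical and do not mirror any specific vendor's API.

```python
import json

# Schemas the developer would describe to the model (layout is illustrative).
SCHEMAS = {
    "get_weather": {
        "description": "Return the current temperature for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    }
}

def get_weather(city: str) -> dict:
    """Hypothetical tool; a real one would call a weather API."""
    return {"city": city, "temp_c": 21}

REGISTRY = {"get_weather": get_weather}

def dispatch(model_output: str) -> str:
    """Parse the model's JSON tool call, run the tool, return the observation."""
    call = json.loads(model_output)
    name, args = call["name"], call.get("arguments", {})
    if name not in REGISTRY:
        return json.dumps({"error": f"unknown tool: {name}"})
    required = SCHEMAS[name]["parameters"]["required"]
    missing = [key for key in required if key not in args]
    if missing:
        return json.dumps({"error": f"missing arguments: {missing}"})
    # The surrounding software, not the model, executes the function.
    return json.dumps(REGISTRY[name](**args))

# The string below plays the role of text generated by the model.
print(dispatch('{"name": "get_weather", "arguments": {"city": "Paris"}}'))
```

Note that errors are returned as observations instead of raised, so the model can read them and react.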

Task Decomposition

For complex goals, agents perform Task Decomposition. They break a massive problem down into smaller, sequential steps, handling one micro-task at a time.
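
As a rough sketch of the idea, the Python below splits a goal into ordered steps and handles them one at a time, passing earlier results forward. The `plan` and `execute` functions are hypothetical placeholders; in a real agent, both would involve LLM or tool calls.

```python
def plan(goal: str) -> list[str]:
    """Stand-in for an LLM planning call; returns a fixed, made-up plan."""
    return ["search flights", "compare prices", "book cheapest option"]

def execute(step: str, context: list[str]) -> str:
    """Stand-in for running one micro-task; real code would call tools."""
    return f"done: {step} (after {len(context)} earlier steps)"

def solve(goal: str) -> list[str]:
    results: list[str] = []
    for step in plan(goal):
        # Each micro-task sees the results of the steps before it.
        results.append(execute(step, results))
    return results

for line in solve("book a flight to Lisbon"):
    print(line)
```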

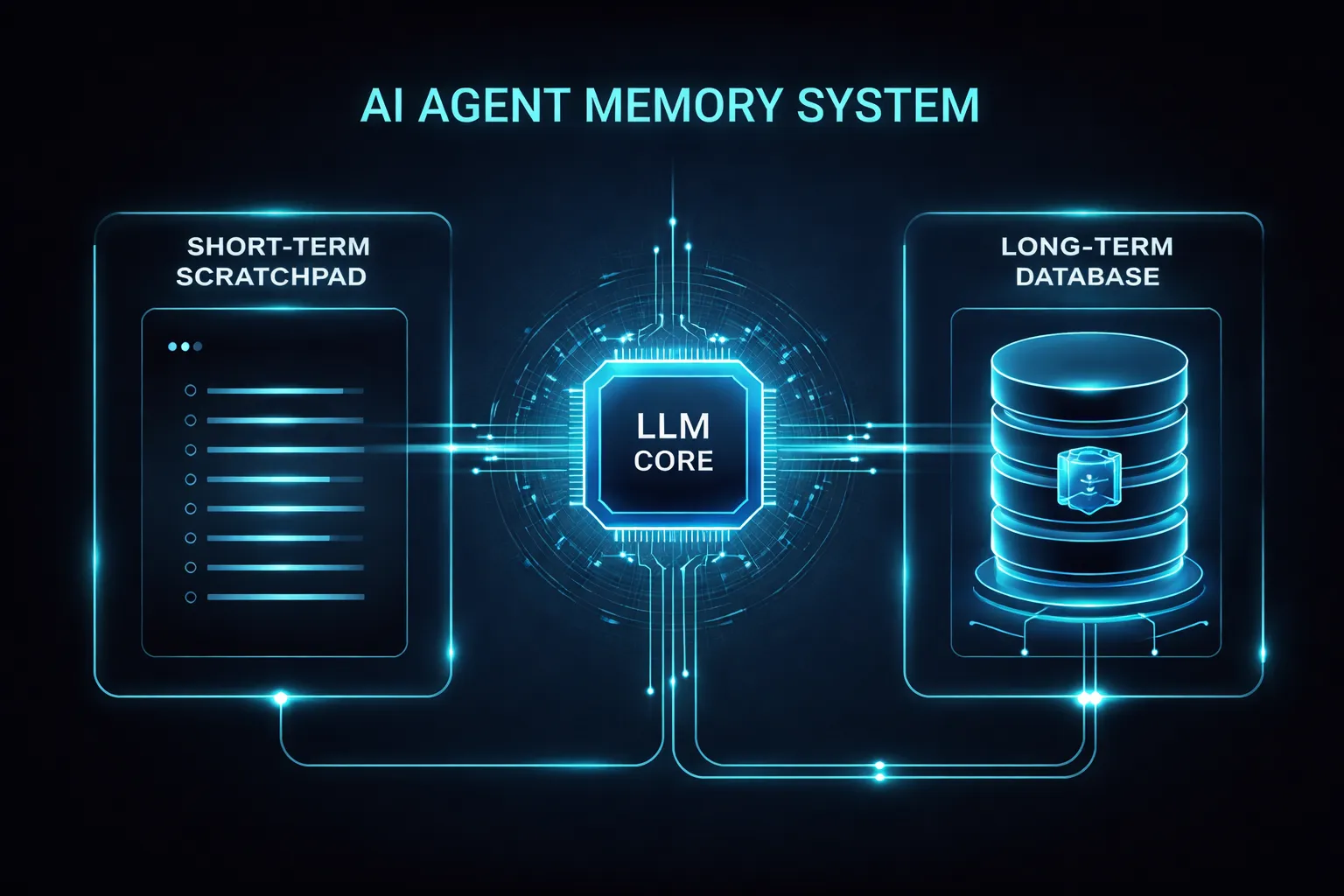

State Management & Memory

Agents need memory to function over multiple steps. This is divided into Short-term Memory (the scratchpad of the current task) and Long-term Memory (a database of past interactions and facts).

State management allows an agent to pause, wait for a slow tool or user approval, and resume exactly where it left off without starting over.
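
One simple way to picture pause-and-resume is to keep the agent's state in a serializable object. The sketch below assumes a hypothetical three-step plan and stores state as JSON; production systems typically persist this in a database or a framework-provided checkpoint store.

```python
import json
from dataclasses import dataclass, field, asdict

@dataclass
class AgentState:
    goal: str
    next_step: int = 0
    scratchpad: list[str] = field(default_factory=list)  # short-term memory

STEPS = ["search", "await_approval", "book"]  # hypothetical plan

def run(state: AgentState, approved: bool = False) -> str:
    """Advance until finished, or pause when a step needs user approval."""
    while state.next_step < len(STEPS):
        step = STEPS[state.next_step]
        if step == "await_approval" and not approved:
            return "paused"
        state.scratchpad.append(f"did {step}")
        state.next_step += 1
    return "finished"

def save(state: AgentState) -> str:
    return json.dumps(asdict(state))

def load(blob: str) -> AgentState:
    return AgentState(**json.loads(blob))

state = AgentState(goal="book a flight")
print(run(state))                    # stops at the approval step
blob = save(state)                   # could be written to disk or a database
restored = load(blob)
print(run(restored, approved=True))  # continues without redoing "search"
```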

Multi-Agent Orchestration

One agent cannot do everything perfectly. Multi-Agent Orchestration involves a network of specialized agents.

A "Supervisor" agent breaks down the task and routes pieces to specialized "Worker" agents (e.g., a Coder agent, a Researcher agent, a Reviewer agent). They collaborate, critique each other, and merge their outputs.
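
The routing pattern can be sketched as follows. The worker functions and the fixed task split are hypothetical; in a real system each worker would be its own LLM-backed agent and the supervisor would decide the split dynamically.

```python
from typing import Callable

def researcher(task: str) -> str:
    return f"[research notes for: {task}]"

def coder(task: str) -> str:
    return f"[code for: {task}]"

def reviewer(task: str) -> str:
    return f"[review of: {task}]"

# Hypothetical worker registry: role name -> agent function.
WORKERS: dict[str, Callable[[str], str]] = {
    "researcher": researcher,
    "coder": coder,
    "reviewer": reviewer,
}

def supervisor(goal: str) -> dict:
    # Fixed decomposition for illustration; a real supervisor would ask an LLM.
    assignments = [
        ("researcher", f"background on {goal}"),
        ("coder", f"implement {goal}"),
    ]
    draft = [WORKERS[role](task) for role, task in assignments]
    # The reviewer critiques the combined worker output before it is merged.
    review = WORKERS["reviewer"](" | ".join(draft))
    return {"draft": draft, "review": review}

result = supervisor("a CSV parser")
print(result["draft"])
print(result["review"])
```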

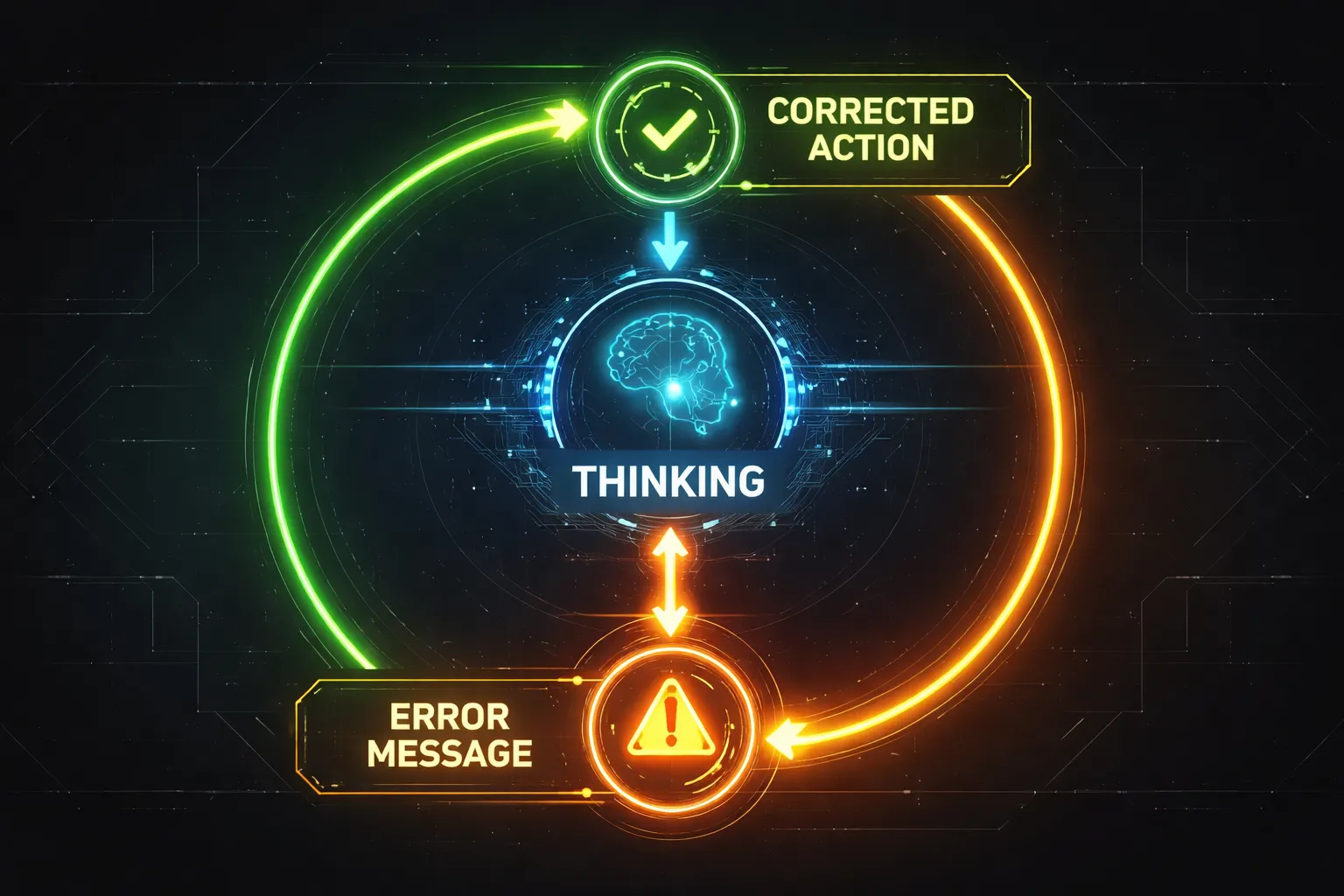

Handling Failure (Self-Correction)

The real world is messy. Tools fail, APIs time out, and queries return empty. Self-Correction is a hallmark of advanced agents.

When an agent observes an error from a tool, it uses its reasoning engine to analyze the failure, adjust its parameters, and try a different approach instead of crashing.
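
A minimal sketch of this behavior is shown below. The `flaky_search` tool and the `adjust` function are hypothetical: `adjust` stands in for the LLM reasoning over the error, and here it simply doubles the timeout when it sees a `TimeoutError`.

```python
from typing import Optional

def flaky_search(query: str, timeout_s: int) -> str:
    """Hypothetical tool that times out unless given a generous timeout."""
    if timeout_s < 10:
        raise TimeoutError(f"no response within {timeout_s}s")
    return f"results for {query!r}"

def adjust(params: dict, error: Exception) -> dict:
    """Stand-in for the LLM analyzing the failure and changing parameters."""
    if isinstance(error, TimeoutError):
        return {**params, "timeout_s": params["timeout_s"] * 2}
    return params

def call_with_self_correction(
    params: dict, max_attempts: int = 3
) -> tuple[Optional[str], list[str]]:
    history: list[str] = []
    for attempt in range(1, max_attempts + 1):
        try:
            return flaky_search(**params), history
        except Exception as err:
            # Observe the error, record it, and try adjusted parameters.
            history.append(f"attempt {attempt}: {err}")
            params = adjust(params, err)
    return None, history  # give up gracefully instead of crashing

result, history = call_with_self_correction({"query": "flights", "timeout_s": 3})
print(result)
print(history)
```

Capping the number of attempts matters: without it, an agent could retry a permanently broken tool indefinitely.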

Knowledge Check

Which concept allows an agent to recover from API failures or empty tool results?

Assessment Starts Now

You are about to begin the final assessment. You will be tested on the concepts of ReAct loops, function calling, state management, and multi-agent systems.

Ensure you are ready. You must score at least 80% to earn your certificate.

Assessment

1. What is the primary difference between a traditional LLM and an Agent LLM?

2. In the ReAct architecture, which phase involves interpreting the output of a tool?

3. What is the purpose of a "Supervisor" in a multi-agent orchestration setup?

4. How does an LLM typically interface with an external API?